I have spent the morning playing with the iPhone app Cortex Camera. I am a bit confused but will stick with it.

The app itself is very simple, but the results are confusing me. Cortex Camera takes many photos (they say 100, via a video capture), then merges them together into a single result. The output, in theory, should be cleaner and clearer than just snapping one photo.
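To get my head around why merging should help at all, here is a minimal Python sketch of the general idea: averaging many noisy frames of the same scene. I don't know what Cortex Camera actually does internally, so this is only an illustration. The function names, the Gaussian noise model, and the numbers (2000 pixels, noise level 20) are all my own assumptions.

```python
import random
import statistics

def capture_frame(scene, noise_sigma, rng):
    """Simulate one noisy exposure of a static scene (assumed Gaussian noise)."""
    return [pixel + rng.gauss(0, noise_sigma) for pixel in scene]

def merge_frames(frames):
    """Merge perfectly aligned frames by averaging them pixel by pixel."""
    return [statistics.fmean(values) for values in zip(*frames)]

def rms_error(image, scene):
    """Root-mean-square difference between an image and the true scene."""
    return (sum((a - b) ** 2 for a, b in zip(image, scene)) / len(scene)) ** 0.5

rng = random.Random(0)  # fixed seed so the run is repeatable
scene = [rng.uniform(0, 255) for _ in range(2000)]  # made-up "true" pixel values
frames = [capture_frame(scene, 20.0, rng) for _ in range(100)]  # 100 noisy shots

single_error = rms_error(frames[0], scene)
merged_error = rms_error(merge_frames(frames), scene)
print(f"single frame error: {single_error:.2f}")
print(f"merged frame error: {merged_error:.2f}")
```

In this toy setup the merged result lands much closer to the true scene than any single frame does. The catch is that the sketch assumes every frame lines up perfectly. Averaging frames that are even slightly shifted smears detail instead of cleaning it up.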

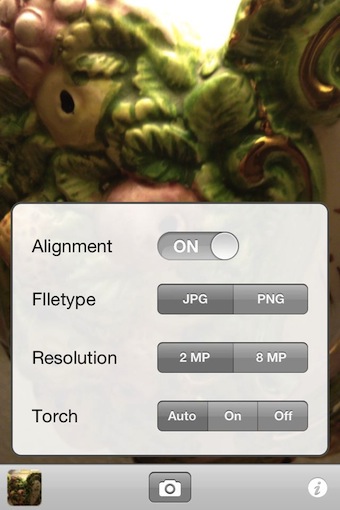

Launching Cortex Camera, you get three options across the bottom: the iPhone’s photo library (view only), the shutter button and the ‘settings’.

The settings in Cortex Camera let you adjust the megapixels. On an iPad the setting goes above what the camera itself can capture, so the merge has to fill in a lot of pixels to reach that size. PNG is not the standard format for the iPhone camera and is supposed to give a clearer image. Alignment controls whether the app merges the photos into one or stacks them so movement shows up as blur.

Taking a photo involves holding still for about 3 seconds. A progress bar appears on the screen, and when the photo is successfully taken it is saved to the iPhone’s photo library. If you move too much, or something passes through the frame while the photo is being taken, the process stops and warns you to move less. I have taken photos with Cortex Camera inside, outside, in low light, in bright light, of big areas, close up… every result is blurry. I have taken more than two dozen photos at both the 2 MP and 8 MP settings, and I just can’t find the situation where merging multiple photos into one looks better than just snapping a photo.

To compare, below are two regular iPhone 4S photos: the first is a regular shot and the second is the iPhone’s HDR. Notice that Cortex Camera zooms in a bit, and its result is narrower/taller than the regular iPhone photos, which are 1060 x 1890.